As usual, you don't need to take notes. The text of my talk with links to the sources will go up at blog.dshr.org after this seminar.

Why Decentralize?

[Figure: Tweets by language]

The platonic ideal of a decentralized system has many advantages over a centralized one performing the same functions:

- It can be more resilient to failures and attacks.

- It can resist acquisition and the consequent enshittification.

- It can scale better.

- It has the economic advantage that it is hard to compare the total system cost with the benefits it provides because the cost is diffused across many independent budgets.

Why Not Decentralize?

But history shows that this platonic ideal is unachievable because system decentralization isn't binary, and systems that aim to be at the decentralized end of the spectrum suffer four major problems:

- Their advantages come with significant additional monetary and operational costs.

- Their user experience is worse, being more complex, slower and less predictable.

- They are in practice only as decentralized as the least decentralized layer in the stack.

- They exhibit emergent behaviors that drive centralization.

What Does "Decentralization" Mean?

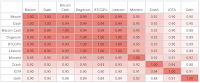

The Nakamoto coefficient is the number of units in a subsystem you need to control 51% of that subsystem.

| Subsystem | Bitcoin | Ethereum |
|---|---|---|
| Mining | 5 | 3 |
| Client | 1 | 1 |
| Developer | 5 | 2 |
| Exchange | 5 | 5 |
| Node | 3 | 4 |
| Owner | 456 | 72 |
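The definition above can be made concrete with a minimal sketch. It assumes each unit's share of the subsystem is known; the function name and the example shares are hypothetical and are not the data behind the table.

```python
def nakamoto_coefficient(shares, threshold=0.51):
    """Smallest number of units whose combined share reaches `threshold` of the total."""
    total = sum(shares)
    running = 0.0
    # Take the largest units first: that is the cheapest way to reach the threshold.
    for count, share in enumerate(sorted(shares, reverse=True), start=1):
        running += share
        if running >= threshold * total:
            return count
    return len(shares)

# Hypothetical percentage shares for six mining pools, for illustration only.
example_shares = [30, 25, 15, 12, 10, 8]
print(nakamoto_coefficient(example_shares))
```

The lower the result, the fewer parties need to cooperate, or be compromised, to control the subsystem.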

Blockchains exemplify a more rigorous way to assess decentralization: to ask whether a node can join the network autonomously, or whether it must obtain permission to join. If the system is "permissioned" it cannot be decentralized; it is centralized around the permission-granting authority. Truly decentralized systems must be "permissionless". My title is wrong; the talk is mostly about permissionless systems, not about the permissioned systems that claim to be decentralized but clearly aren't.

TCP/IP

[Figure: IBM Cabling System]

Domain Name System

The basis of TCP/IP is the end-to-end principle, that to the extent possible network nodes communicate directly with each other, not relying on functions in the infrastructure. So why the need for root servers and IANA? It is because nodes need some way to find each other, and the list of root servers' IP addresses provides a key into the hierarchical structure of DNS.

This illustrates the important point that a system is only as decentralized as the least decentralized layer in its stack.

LOCKSS

Fifteen years on from CMU, when Vicky Reich and I started the LOCKSS program, we needed a highly resilient system to preserve library materials, so the advantages of decentralization loomed large. In particular, we realized that:

- A centralized system would provide an attractive target for litigation by the publisher oligopoly.

- The paper library system already formed a decentralized, permissionless network.

Why LOCKSS Centralized

- Software monoculture

- Centralized development

- Permissioning ensures funding

- Big publishers hated decentralization

- Although we always paid a lot of attention to the security of LOCKSS boxes, we understood that a software monoculture was vulnerable to software supply chain attacks. So we designed a very simple protocol hoping that there would be multiple implementations. But it turned out that the things that a LOCKSS box needed to do other than handling the protocol were quite complex, so despite our best efforts we ended up with a software monoculture.

- We hoped that by using the BSD open-source license we would create a diverse community of developers, but we over-estimated the expertise and the resources of the library community, so Stanford provided the overwhelming majority of the programming effort.

- The program got started with small grants from Michael Lesk at NSF, then subsequently major grants from the NSF, Sun Microsystems and Don Waters at the Mellon Foundation. But Don was clear that grant funding could not provide the long-term sustainability needed for digital preservation. So he provided a matching grant to fund the transition to being funded by the system's users. This also transitioned the system to being permissioned, as a way to ensure the users paid.

- Although many small and open-access publishers were happy to allow LOCKSS to preserve their content, the oligopoly publishers never were. Eventually they funded a completely closed network of a dozen huge systems at major libraries around the world called CLOCKSS. This is merely the biggest of a number of closed, private LOCKSS networks that were established to serve specific genres of content, such as government documents.

Gossip Protocols

If LOCKSS was to be permissionless there could be no equivalent of DNS, so how did a new node find other nodes to vote with?

A gossip protocol or epidemic protocol is a procedure or process of computer peer-to-peer communication that is based on the way epidemics spread. Some distributed systems use peer-to-peer gossip to ensure that data is disseminated to all members of a group. Some ad-hoc networks have no central registry and the only way to spread common data is to rely on each member to pass it along to their neighbors.

Wikipedia

Suppose you have a decentralized network with thousands of nodes that can join and leave whenever they want, and you want to send a message to all the current nodes. This might be because they are maintaining a shared state, or to ask a question that a subset might be able to answer. You don't want to enumerate the nodes, because it would be costly in time and network traffic, and because the answer might be out-of-date by the time you got it. And even if you did, sending messages individually to the thousands of nodes would be expensive. This is what IP multicast was for, but it doesn't work well in practice. So you build multicast on top of IP using a gossip protocol.

Each node knows a few other nodes. The first time it receives a message it forwards it to them, along with the names of some of the nodes it knows. As the alternate name of "epidemic protocol" suggests, this is a remarkably effective mechanism. All that a new node needs in order to join is for the network to publish a few "bootstrap nodes", similar to the way an Internet node accesses DNS by having the set of root servers wired in. But this bootstrap mechanism is inevitably centralized.
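The forwarding rule can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not any real system's protocol: the topology is random, every node knows exactly `fanout` peers, peer lists are fixed (the exchange of node names is omitted), and the function and parameter names are hypothetical.

```python
import random

def gossip(num_nodes=1000, fanout=3, seed=1):
    """Spread one message by gossip; return (nodes reached, messages sent)."""
    rng = random.Random(seed)
    # Assumption: each node knows `fanout` other nodes chosen at random.
    peers = {}
    for n in range(num_nodes):
        candidates = rng.sample(range(num_nodes), fanout + 1)
        peers[n] = [c for c in candidates if c != n][:fanout]

    seen = {0}        # node 0 originates the message
    frontier = [0]
    messages = 0
    while frontier:
        next_frontier = []
        for node in frontier:
            for peer in peers[node]:
                messages += 1
                if peer not in seen:   # a node forwards only on first receipt
                    seen.add(peer)
                    next_frontier.append(peer)
        frontier = next_frontier
    return len(seen), messages

reached, sent = gossip()
print(f"reached {reached} of 1000 nodes using {sent} messages")
```

Because each node forwards only once, the total traffic is the number of nodes reached times the fanout, and no node ever needs a list of the whole membership.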

The LOCKSS nodes used a gossip protocol to communicate, so in theory all a library needed to join in was to know another library running a node. In the world of academic libraries this didn't seem like a problem. It turned out that the bootstrap node all the libraries knew was Stanford, the place they got the software and the support. So just like DNS, the root identity was effectively wired-in.

Bitcoin

The network timestamps transactions by hashing them into an ongoing chain of hash-based proof-of-work, forming a record that cannot be changed without redoing the proof-of-work. The longest chain not only serves as proof of the sequence of events witnessed, but proof that it came from the largest pool of CPU power. As long as a majority of CPU power is controlled by nodes that are not cooperating to attack the network, they'll generate the longest chain and outpace attackers.

Satoshi Nakamoto

Fast forward another ten years and Satoshi Nakamoto published Bitcoin: A Peer-to-Peer Electronic Cash System, a ledger implemented as a chain of blocks containing transactions. Like LOCKSS, the system needed a Sybil-proof way to achieve consensus, in his case on the set of transactions in the next block to be added to the chain. Unlike LOCKSS, where nodes voted in single-phase elections, Nakamoto implemented a three-phase selection mechanism:

- One node is selected from the network using Proof-of-Work. It is the first node to guess a nonce that makes the hash of the block have the required number of leading zeros.

- The selected node proposes the content of the next block via the gossip network.

- The "longest chain rule", Nakamoto's most important contribution, ensures that the network achieves consensus on the block proposed by the selected node.
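The first phase, guessing a nonce, can be sketched in a few lines. This is a simplified illustration rather than Bitcoin's actual code: it uses a single SHA-256 over hypothetical block bytes and counts leading zero bits, and the function name and default difficulty are assumptions chosen so that it runs quickly.

```python
import hashlib

def mine(block_data: bytes, zero_bits: int = 16):
    """Try nonces in turn until SHA-256(block_data + nonce) has `zero_bits` leading zero bits."""
    # Read as an integer, a hash with that many leading zero bits is below this target.
    target = 1 << (256 - zero_bits)
    nonce = 0
    while True:
        digest = hashlib.sha256(block_data + nonce.to_bytes(8, "big")).digest()
        if int.from_bytes(digest, "big") < target:
            return nonce, digest.hex()
        nonce += 1

nonce, digest_hex = mine(b"hypothetical block contents")
print(nonce, digest_hex)
```

Each extra zero bit doubles the expected number of guesses, while checking a claimed nonce takes a single hash. That asymmetry is what makes the selection costly to win but cheap to verify.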

Increasing Returns to Scale

The application to the Bitcoin network starts with this observation. The whole point of the Bitcoin protocol is to make running a miner, a node in the network, costly. The security of the system depends upon making an attack more costly to mount than it would gain. Miners need to defray the costs the system imposes in terms of power, hardware, bandwidth, staff and so on. Thus the protocol rewards miners with newly minted Bitcoin for winning the race for the next block.

Bitcoin Economics

Nakamoto's vision of the network was of many nodes of roughly equal power, "one CPU one vote". This has two scaling problems:

- The target block time is 10 minutes, so in a network of 600 equal nodes the average time between rewards is 100 hours, or about 4 days. But in a network of 600,000 equal nodes it is about 4,000 days or about 11 years. In such a network the average node will never gain a reward before it is obsolete.

- Moore's law means that over timescales of years the nodes are not equal, even if they are all CPUs. But shortly after Bitcoin launched, miners figured out that GPUs were much better mining rigs than CPUs, and later that mining ASICs were even better. Thus the miner's investment in hardware has only a short time to return a profit.
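The arithmetic behind the first problem can be checked with a short sketch. The only inputs are the 10-minute target block time and the node counts from the text; the function name is hypothetical.

```python
BLOCK_TIME_MINUTES = 10  # Bitcoin's target block time

def mean_days_between_rewards(equal_nodes: int) -> float:
    """With equal nodes, each wins one block in `equal_nodes` on average."""
    return equal_nodes * BLOCK_TIME_MINUTES / (60 * 24)

for n in (600, 600_000):
    days = mean_days_between_rewards(n)
    print(f"{n:>7} nodes: {days * 24:,.0f} hours = {days:,.1f} days = {days / 365:.1f} years")
```

The wait grows linearly with the number of equal nodes, which is the incentive to pool mining power.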

[Figure: Mining Pools 02/27/23]

The block rewards inflate the currency, currently by about $100M/day. This, plus fees that can reach $23M/day, is the cost to run a system that currently processes 400K transactions/day: over $250 per transaction in block rewards, plus up to $57 per transaction in fees. Let's talk about the excess costs of decentralization!

Like most permissionless networks, Bitcoin nodes communicate using a gossip protocol. So, just like DNS clients and LOCKSS boxes, they need to know one or more bootstrap nodes in order to join the network.
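As a quick check of the per-transaction figures, here is the division spelled out, using only the round numbers quoted in the text (the variable names are hypothetical):

```python
block_rewards_usd_per_day = 100e6   # about $100M/day of newly minted Bitcoin
peak_fees_usd_per_day = 23e6        # fees can reach $23M/day
transactions_per_day = 400e3        # about 400K transactions/day

reward_cost_per_tx = block_rewards_usd_per_day / transactions_per_day
fee_cost_per_tx = peak_fees_usd_per_day / transactions_per_day
print(f"${reward_cost_per_tx:,.2f} in rewards plus up to ${fee_cost_per_tx:,.2f} in fees per transaction")
```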

In Bitcoin Core, the canonical Bitcoin implementation, these bootstrap nodes are hard-coded as trusted DNS servers maintained by the core developers.

Haseeb Qureshi, Bitcoin's P2P Network

There are also fall-back nodes in case of DNS failure, encoded in chainparamsseeds.h:

    /**
     * List of fixed seed nodes for the bitcoin network
     * AUTOGENERATED by contrib/seeds/generate-seeds.py
     *
     * Each line contains a BIP155 serialized (networkID, addr, port) tuple.
     */

Economies of Scale in Peer-to-Peer Networks

I suddenly realized that this centralization wasn't something about Bitcoin, or LOCKSS for that matter. It was an inevitable result of economic forces generic to all peer-to-peer systems. So I spent much of the weekend sitting in one of the hotel's luxurious houses writing Economies of Scale in Peer-to-Peer Networks.

My insight was that the need to make an attack expensive wasn't something about Bitcoin; any permissionless peer-to-peer network would have the same need. In each case the lack of a root of trust meant that security was linear in cost, not exponential as with, for example, systems using encryption based upon a certificate authority. Thus any successful decentralized peer-to-peer network would need to reimburse nodes for the costs they incurred. How can the nodes' costs be reimbursed?

There is no central authority capable of collecting funds from users and distributing them to the miners in proportion to these efforts. Thus miners' reimbursement must be generated organically by the blockchain itself; a permissionless blockchain needs a cryptocurrency to be secure.

And thus any successful permissionless network would be subject to the centralizing force of economies of scale.

Cryptocurrencies

[Figure: ETH miners 11/2/20]

The fact that the coins ranked 3, 6 and 7 by "market cap" don't even claim to be decentralized shows that decentralization is irrelevant to cryptocurrency users. Numbers 3 and 7 are stablecoins with a combined "market cap" of $134B. The largest stablecoin that claims to be decentralized is DAI, ranked at 24 with a "market cap" of $5B. Launching a new currency by claiming better, more decentralized technology than Bitcoin or Ethereum is pointless, as examples such as Chia, now ranked #182, demonstrate. Users care about liquidity, not about technology.

The holders of coins show a similar concentration; the Gini Coefficients Of Cryptocurrencies are extreme.

Ethereum's Merge

[Figure: ETH Stakes 05/22/23]

Ethereum Validators

Time in proof-of-stake Ethereum is divided into slots (12 seconds) and epochs (32 slots). One validator is randomly selected to be a block proposer in every slot. This validator is responsible for creating a new block and sending it out to other nodes on the network. Also in every slot, a committee of validators is randomly chosen, whose votes are used to determine the validity of the block being proposed. Dividing the validator set up into committees is important for keeping the network load manageable. Committees divide up the validator set so that every active validator attests in every epoch, but not in every slot.

PROOF-OF-STAKE (POS)

Ethereum's consensus mechanism is vastly more complex than Bitcoin's, but it shares the same three-phase structure. In essence, this is how it works. To take part, a node must stake, or escrow, more than a minimum amount of the cryptocurrency, then:

- A "smart contract" uses a pseudo-random algorithm to select one node and a "committee" of other nodes with probabilities based on the nodes' stakes.

- The one node proposes the content of the next block.

- The "committee" of other validator nodes vote to approve the block, leading to consensus.
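The first of these phases, selection with probability based on stake, can be sketched as follows. This is a deliberately simplified illustration and not Ethereum's actual algorithm: the stakes, node names, committee size and function name are all hypothetical, and Python's seeded generator stands in for the on-chain pseudo-random source.

```python
import random
from collections import Counter

def select_proposer_and_committee(stakes, committee_size, rng):
    """Pick one proposer and a committee, each with probability proportional to stake."""
    nodes = list(stakes)
    proposer = rng.choices(nodes, weights=[stakes[n] for n in nodes], k=1)[0]
    remaining = [n for n in nodes if n != proposer]
    weights = [stakes[n] for n in remaining]
    target = min(committee_size, len(remaining))
    committee = set()
    while len(committee) < target:   # redraw until enough distinct members
        committee.add(rng.choices(remaining, weights=weights, k=1)[0])
    return proposer, committee

# Hypothetical stakes: two large stakers plus 54 small ones holding 1 unit each.
stakes = {"big-1": 32, "big-2": 14, **{f"small-{i}": 1 for i in range(54)}}
rng = random.Random(0)
slots = 10_000
proposals = Counter(
    select_proposer_and_committee(stakes, committee_size=8, rng=rng)[0]
    for _ in range(slots)
)
share = proposals["big-1"] / slots
print(f"big-1 holds 32% of the stake and proposed {share:.1%} of the blocks")
```

Under these assumptions a staker's share of proposals, and so of rewards, tracks its share of the stake.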

Like Bitcoin, the nodes taking part in consensus gain a block reward currently running at $2.75M/day and fees running about $26M/day. This is the cost to run a distributed computer 1/5000 as powerful as a Raspberry Pi.

Validator Centralization

The prospect of a US approval of Ether exchange-traded funds threatens to exacerbate the Ethereum ecosystem’s concentration problem by keeping staked tokens in the hands of a few providers, S&P Global warns.

...

Coinbase Global Inc. is already the second-largest validator ... controlling about 14% of staked Ether. The top provider, Lido, controls 31.7% of the staked tokens,

...

US institutions issuing Ether-staking ETFs are more likely to pick an institutional digital asset custodian, such as Coinbase, while side-stepping decentralized protocols such as Lido. That represents a growing concentration risk if Coinbase takes a significant share of staked ether, the analysts wrote.

Coinbase is already a staking provider for three of the four largest ether-staking ETFs outside the US, they wrote. For the recently approved Bitcoin ETF, Coinbase was the most popular choice of crypto custodian by issuers. The company safekeeps about 90% of the roughly $37 billion in Bitcoin ETF assets, chief executive officer Brian Armstrong said

Yueqi Yang, Ether ETF Applications Spur S&P Warning on Concentration Risks

A system in which those with lots of money make lots more money but those with a little money pay those with a lot, and which has large economies of scale, might be expected to suffer centralization. As the pie-chart shows, this is what happened. In particular, exchanges hold large amounts of Ethereum on behalf of their customers, and they naturally stake it to earn income. The top two validators, the Lido pool and the Coinbase exchange, have 46.1% of the stake, and the top five have 56.7%.

Producer Centralization

[Figure: Producers 03/18/24]

Olga Kharif and Isabelle Lee report that these concentrations are a major focus of the SEC's consideration of Ethereum spot ETFs:

In its solicitations for public comments on the proposed spot Ether ETFs, the SEC asked, “Are there particular features related to ether and its ecosystem, including its proof of stake consensus mechanism and concentration of control or influence by a few individuals or entities, that raise unique concerns about ether’s susceptibility to fraud and manipulation?”

Software Centralization

A bug in Ethereum's Nethermind client software – used by validators of the blockchain to interact with the network – knocked out a chunk of the chain's key operators on Sunday.

...

Nethermind powers around 8% of the validators that operate Ethereum, and this weekend's bug was critical enough to pull those validators offline. ... the Nethermind incident followed a similar outage earlier in January that impacted Besu, the client software behind around 5% of Ethereum's validators.

...

Around 85% of Ethereum's validators are currently powered by Geth, and the recent outages to smaller execution clients have renewed concerns that Geth's dominant market position could pose grave consequences if there were ever issues with its programming.

...

Cygaar cited data from the website execution-diversity.info noting that popular crypto exchanges like Coinbase, Binance and Kraken all rely on Geth to run their staking services. "Users who are staked in protocols that run Geth would lose their ETH" in the event of a critical issue, Cygaar wrote.

The fundamental problem is that most layers in the software stack are highly concentrated, starting with the three operating systems. Network effects and economies of scale apply at every layer. Remember "no-one ever gets fired for buying IBM"? At the Ethereum layer, it is "no-one ever gets fired for using Geth" because, if there was ever a big problem with Geth, the blame would be so widely shared.

The Decentralized Web

One mystery was why venture capitalists like Andreessen Horowitz, normally so insistent on establishing wildly profitable monopolies, were so keen on the idea of a Web 3 implemented as "decentralized apps" (dApps) running on blockchains like Ethereum. Moxie Marlinspike revealed the reason:

companies have emerged that sell API access to an ethereum node they run as a service, along with providing analytics, enhanced APIs they’ve built on top of the default ethereum APIs, and access to historical transactions. Which sounds… familiar. At this point, there are basically two companies. Almost all dApps use either Infura or Alchemy in order to interact with the blockchain. In fact, even when you connect a wallet like MetaMask to a dApp, and the dApp interacts with the blockchain via your wallet, MetaMask is just making calls to Infura!

Providing a viable user experience when interacting with blockchains is a market with economies of scale and network effects, so it has centralized.

It Isn't About The Technology

What is the centralization that decentralized Web advocates are reacting against? Clearly, it is the domination of the Web by the FANG (Facebook, Amazon, Netflix, Google) and a few other large companies such as the cable oligopoly. These companies came to dominate the Web for economic not technological reasons. The Web, like other technology markets, has very large increasing returns to scale (network effects, duh!). These companies build centralized systems using technology that isn't inherently centralized but which has increasing returns to scale. It is the increasing returns to scale that drive the centralization.

If a decentralized Web doesn't achieve mass participation, nothing has really changed. If it does, someone will have figured out how to leverage antitrust to enable it. And someone will have designed a technical infrastructure that fit with and built on that discovery, not a technical infrastructure designed to scratch the itches of technologists.

I think this is still the situation.

BitTorrent

Seven years ago I wrote:

Unless decentralized technologies specifically address the issue of how to avoid increasing returns to scale they will not, of themselves, fix this economic problem. Their increasing returns to scale will drive layering centralized businesses on top of decentralized infrastructure, replicating the problem we face now, just on different infrastructure.

Blockchains

In 2022 DARPA funded a large team from the Trail of Bits cybersecurity company to publish a report entitled Are Blockchains Decentralized? which conformed to Betteridge's Law by concluding "No":

Every widely used blockchain has a privileged set of entities that can modify the semantics of the blockchain to potentially change past transactions.

The "privileged set of entities" must at least include the developers and maintainers of the software, because:

The challenge with using a blockchain is that one has to either (a) accept its immutability and trust that its programmers did not introduce a bug, or (b) permit upgradeable contracts or off-chain code that share the same trust issues as a centralized approach.

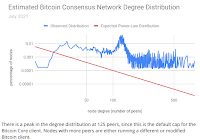

A dense, possibly non-scale-free, subnetwork of Bitcoin nodes appears to be largely responsible for reaching consensus and communicating with miners—the vast majority of nodes do not meaningfully contribute to the health of the network.

And second:

Of all Bitcoin traffic, 60% traverses just three ISPs.

The Ethereum ecosystem has a significant amount of code reuse: 90% of recently deployed Ethereum smart contracts are at least 56% similar to each other.

The risk isn't confined to individual ecosystems; it is generic to the entire cryptosphere because, as the chart shows, the code reuse spans across blockchains to such an extent that Ethereum's Geth shares 90% of its code with Bitcoin Core.

Decentralized Finance

TL;DR: DeFi is neither decentralized, nor very good finance, so regulators should have no qualms about clamping down on it to protect the stability of our financial system and broader economy.

DeFi risks and the decentralisation illusion by Sirio Aramonte, Wenqian Huang and Andreas Schrimpf of the Bank for International Settlements similarly conclude:

While the main vision of DeFi’s proponents is intermediation without centralised entities, we argue that some form of centralisation is inevitable. As such, there is a “decentralisation illusion”. First and foremost, centralised governance is needed to take strategic and operational decisions. In addition, some features in DeFi, notably the consensus mechanism, favour a concentration of power.

| Protocol | Revenue ($M) | Market share (%) |
|---|---|---|
| Lido | 304 | 55.2 |
| Uniswap V3 | 55 | 10.0 |
| Maker DAO | 48 | 8.7 |
| AAVE V3 | 24 | 4.4 |
| Top 4 | | 78.2 |
| Venus | 18 | 3.3 |
| GMX | 14 | 2.5 |
| Rari Fuse | 14 | 2.5 |
| Rocket Pool | 14 | 2.5 |
| Pancake Swap AMM V3 | 13 | 2.4 |
| Compound V2 | 13 | 2.4 |
| Morpho Aave V2 | 10 | 1.8 |
| Goldfinch | 9 | 1.6 |
| Aura Finance | 8 | 1.5 |
| Yearn Finance | 7 | 1.3 |
| Stargate | 5 | 0.9 |
| Total | 551 | |

Based on the [Herfindahl-Hirschman Index], the most competition exists between decentralized finance exchanges, with the top four venues holding about 54% of total market share. Other categories including decentralized derivatives exchanges, DeFi lenders, and liquid staking, are much less competitive. For example, the top four liquid staking projects hold about 90% of total market share in that category,

Based on data on 180 days of revenue of DeFi projects from Shen's article, I compiled this table, showing that the top project, Lido, had 55% of the revenue, the top two had 2/3, and the top four projects had 78%. This is clearly a highly concentrated market, typical of cryptocurrency markets in general.
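The concentration figures can be recomputed from the table's revenue column with a short sketch. It uses the table's stated total of $551M as the denominator so that the shares match the table's share column (the rounded rows sum to slightly more). The function names are hypothetical, and the index is the usual sum of squared percentage shares, here computed only over the listed protocols.

```python
# 180-day revenue in $M, copied from the table.
revenue_musd = {
    "Lido": 304, "Uniswap V3": 55, "Maker DAO": 48, "AAVE V3": 24,
    "Venus": 18, "GMX": 14, "Rari Fuse": 14, "Rocket Pool": 14,
    "Pancake Swap AMM V3": 13, "Compound V2": 13, "Morpho Aave V2": 10,
    "Goldfinch": 9, "Aura Finance": 8, "Yearn Finance": 7, "Stargate": 5,
}
STATED_TOTAL_MUSD = 551  # the table's Total row

def top_n_share(revenues, n, total):
    """Percentage of `total` earned by the n largest protocols."""
    return 100 * sum(sorted(revenues.values(), reverse=True)[:n]) / total

def herfindahl_hirschman_index(revenues, total):
    """Sum of squared percentage shares; 10,000 would be a pure monopoly."""
    return sum((100 * r / total) ** 2 for r in revenues.values())

for n in (1, 2, 4):
    print(f"top {n}: {top_n_share(revenue_musd, n, STATED_TOTAL_MUSD):.1f}%")
print(f"HHI: {herfindahl_hirschman_index(revenue_musd, STATED_TOTAL_MUSD):,.0f}")
```

Lido's share alone contributes more than 3,000 points to the index, so the result is dominated by a single protocol.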

Federation

How attractive are these advantages? The first bar chart shows worldwide web traffic to social media sites. Every single one of these sites is centralized, even the barely visible ones like Nextdoor. Note that Meta owns 3 of the top 4, with about 5 times the traffic of Twitter.

That leaves Mastodon with a total of 1.8 million monthly active users at present, an increase of 5% month-over-month and 10,000 servers, up 12%

In terms of monthly active users, Twitter claims 528M, Threads claims 130M, Bluesky claims 5.2M and Mastodon claims 1.8M. Note that the only federate-able one with significant market share is owned by the company that owns 3 of the top 4 centralized systems. Facebook claims 3,000M MAU, Instagram claims 2,000M MAU, and WhatsApp claims 2,000M MAU. Thus Threads is about 3% of Facebook alone, so not significant in Meta's overall business. It may be early days yet, but federated social media have a long way to go before they have significant market share.

Summary

Radia Perlman's answer to the question of what exactly you get in return for the decentralization provided by the enormous resource cost of blockchain technologies is:

a ledger agreed upon by consensus of thousands of anonymous entities, none of which can be held responsible or be shut down by some malevolent government

This is what the blockchain advocates want you to think, but as Vitalik Buterin, inventor of Ethereum, pointed out in The Meaning of Decentralization:

In the case of blockchain protocols, the mathematical and economic reasoning behind the safety of the consensus often relies crucially on the uncoordinated choice model, or the assumption that the game consists of many small actors that make decisions independently. If any one actor gets more than 1/3 of the mining power in a proof of work system, they can gain outsized profits by selfish-mining. However, can we really say that the uncoordinated choice model is realistic when 90% of the Bitcoin network’s mining power is well-coordinated enough to show up together at the same conference?

As we have seen, in practice it just isn't true that "the game consists of many small actors that make decisions independently" or "thousands of anonymous entities". Even if you could prove that there were "thousands of anonymous entities", there would be no way to prove that they were making "decisions independently". One of the advantages of decentralization that Buterin claims is:

it is much harder for participants in decentralized systems to collude to act in ways that benefit them at the expense of other participants, whereas the leaderships of corporations and governments collude in ways that benefit themselves but harm less well-coordinated citizens, customers, employees and the general public all the time.

But this is only the case if in fact "the game consists of many small actors that make decisions independently" and they are "anonymous entities" so that it is hard for the leader of a conspiracy to find conspirators to recruit via off-chain communication. Alas, the last part isn't true for blockchains like Ethereum that support "smart contracts", as Philip Daian et al's On-Chain Vote Buying and the Rise of Dark DAOs shows that "smart contracts" also provide for untraceable on-chain collusion in which the parties are mutually pseudonymous.

Questions

If we want the advantages of permissionless, decentralized systems in the real world, we need answers to these questions:

- What is a viable business model for participation that has decreasing returns to scale?

- How can Sybil attacks be prevented other than by imposing massive costs?

- How can collusion between supposedly independent nodes be prevented?

- What software development and deployment model prevents a monoculture emerging?

- Does federation provide the upsides of decentralization without the downsides?

Pac Finance is a fork of the Aave "decentralized finance" protocol. Adam James reports that $26 million in 'unnecessary liquidations' hit Blast-based lender Pac Finance. Why did these liquidations happen?

"Pac Finance posted on X that it is aware of the issue and is in contact with impacted users. It also claims to be "actively developing a plan with them to mitigate the issue."

"In our effort to adjust the LTV, we tasked a smart contract engineer to make the necessary changes," the platform explained. "However, it was discovered that the liquidation threshold was altered unexpectedly without prior notification to our team, leading to the current issue."

"Going forward, we will set up a governance contract/timelock and forum for all future upgrades to ensure that discussions are planned ahead of time," it added."

A system where an organization can task a single "smart contract engineer" to change the protocol is obviously not decentralized.

I waited too long to post my comment and got a 403, Forbidden, which ate it. I'd only saved part of it so am restoring what I can.

I'm guessing that with the map you intended your audience to think "decentralization is better", and then went on to demolish this assumption with respect to crypto in your talk. I hope you used a pointer or otherwise highlighted the decentralized and centralized countries. Because reading it here on the blog it kind of derailed me onto a tangent trying to figure it out as well as your intent by including it.

"WhatsApp claims 2,000 MAU." - Forgot an M?

Tardigrade, thanks for the correction.

As usual, Molly White's We can have a different web is beautifully written, an extended analogy with gardens. She is right that "we" can have a different Web, for some definition of "we".

She asked people on Twitter and Mastodon how old they were during the "good old days" of the Web; most were under 20 and only 4% or less were over 40:

"It may be that we are, at least in part, yearning for the days when logging on to the internet was less likely to mean going to work or paying a bill and more likely to mean playing Runescape or hoping that the green circle on AIM would light up next to the name of our crush after school."

I was in my 50s in the "good old days", and I had already given up "social media" when the signal to noise ratio in the Usenet newsgroups to which I subscribed dropped too low. So I think White was sampling from a skewed population.

I'm someone who lives in a different Web, minimizing my use of the "platforms". I do use Google's Blogger and Gmail, but every month I use Google Takeout to download everything I have created there. I could re-create this blog from the downloads, and transfer the mail to the personal mail server I run. I don't post or look at a timeline on Twitter, but I do regularly check a select list of open source intelligence accounts there for news of the war in Ukraine. Unlike most, I can get away with this because I am retired, have no need to monetize my Web presence, and have a circle of long-time friends who communicate via email.

I think White's use of "can" in her title is true but misleading. She should have written "could". In practice only a very select minority like me "can" have a different Web. Most people "could" have a different Web subject to an "if" that White doesn't explain.

My attempt to explain the "if" was in 2018, when I wrote It Isn't About The Technology, arguing that the problem is economic, not technical. White is right that we have all the technical tools we need, but we don't know how to solve the economic problems of economies of scale and network effects.