| DEC 340 display |

A couple of years later I started life as a Cambridge undergraduate and encountered computer graphics on a PDP-7 with a DEC 340 display. Just before that, one of the smartest people I ever ended up meeting co-authored a paper on computer graphics entitled On the Design of Display Processors. Ivan Sutherland introduced the Wheel of Reincarnation as applied to graphics hardware: the idea that hardware design is cyclical. A fixed-function I/O peripheral grows over time into a programmable I/O processor, which in turn grows into a fully functional computer driving a fixed-function display, and the cycle begins again.


| Virtua Fighter on NV1 |

| GeForce GTX280 |

The wheel also applies to graphics and user interface software. The early window systems, such as SunView and the Andrew window system, were libraries of fixed functions, as was the X Window System. James Gosling and I tried to move a half-turn around the wheel with NeWS, which was a user interface environment fully programmable in PostScript, but we were premature.


| news.bbc.co.uk 12/1/98 |


| Visiting the New York Times |

What drives technology around the wheel is the bandwidth and latency of communication. You want to have high bandwidth and low latency between the display and the nearest programmable computer. Despite some recent attempts, such as NVIDIA's Gaming-as-a-Service, I think it will be a long time before the Web moves the next half-turn around the wheel.

Kris and I organized a workshop last year to look at the problems the evolution of the Web poses for attempts to collect and preserve it. Here is the list of problem areas the workshop identified:

- Database driven features & functions

- Complex/variable URI formats & inconsistent/variable link implementations

- Dynamically generated, ever changing, URIs

- Rich Media

- Scripted, incremental display & page loading mechanisms

- Scripted, HTML forms

- Multi-sourced, embedded material

- Dynamic login/auth services: captchas, cross-site/social authentication, & user-sensitive embeds

- Alternate display based on user agent or other parameters

- Exclusions by convention

- Exclusions by design

- Server side scripts & remote procedure calls

- HTML5 "web sockets"

- Mobile publishing


| Not an article from Graft |


| An article from Graft |

When someone says HTML, you tend to think of a page like this, which looks like vanilla HTML but is actually an HTML5 geolocation demo. Most of what you actually get from Web servers now is programs, like the nearly 12KB of Javascript included by the three lines near the top.

This is the shortest of the three files. A crawler collecting the page can't just scan the content to find the links to the next content it needs to request. It has to execute the program to find the links, which raises all sorts of issues.
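To make the difference concrete, here is a minimal sketch of the "just scan the content" approach, using Python's standard `html.parser`. The page and its paths are hypothetical, not taken from the demo above. The scanner finds the links that appear literally in the markup but has no way to see a link that a script assembles at run time.

```python
from html.parser import HTMLParser


class LinkScanner(HTMLParser):
    """Collect href/src attribute values that appear literally in the markup."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                self.links.append(value)


def scan_links(html):
    """Return the links a non-executing crawler would discover."""
    scanner = LinkScanner()
    scanner.feed(html)
    scanner.close()
    return scanner.links


# Hypothetical page: two literal links, plus one link built by a script.
PAGE = """
<html><head><script src="/js/app.js"></script></head>
<body>
<a href="/about.html">About</a>
<script>
  var id = 40 + 2;
  var a = document.createElement("a");
  a.setAttribute("href", "/article/" + id);
  document.body.appendChild(a);
</script>
</body></html>
"""

found = scan_links(PAGE)
print(found)
```

The scan reports `/js/app.js` and `/about.html`. The link to `/article/42` exists only after the script has run, so a crawler that does not execute the page never requests it.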

The program may be malicious, so its execution needs to be carefully sandboxed. Even if it isn't intended to be malicious, its execution will take an unpredictable amount of time, which can amount to a denial-of-service attack on the crawler. How many of you have encountered Web pages that froze your browser? Execution may not be slow enough to amount to an attack, but it will be a lot more expensive than simply scanning for links. Ingesting the content just got a lot more expensive in compute terms, which doesn't help the whole "economic sustainability is job #1" issue.

It is easy to say "execute the content". But the execution of the content depends on the inputs the program obtains, in this case the geolocation of the crawler and the weather there at the time of the crawl. In general these inputs depend on, for example, the set of cookies and the contents of HTML5's local storage in the "browser" the crawler is emulating, the state of all the external services and databases the program may call upon, and the user's inputs. So the crawler has not merely to run the program; it has to emulate a user mousing and clicking everywhere in the page in search of behaviors that trigger new links.

But we're not just finding links for their own sake; we want to preserve those links and the content they point to for future dissemination. If we preserve the programs for future re-execution we also have to preserve some of their inputs, such as the responses to database queries, and supply those responses at the appropriate times during the re-execution of the program. Other inputs, such as mouse movements and clicks, have to be left to the future reader to supply. This is very tricky, including as it does issues such as faking secure connections.
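The capture-and-resupply idea can be sketched as a record/replay wrapper around whatever the program uses to reach external services. This is an illustration under assumptions, not how any real crawler does it: `RecordingFetcher`, `ReplayFetcher`, `page_program`, and the `weather.example` URL are all hypothetical names, and the "live service" is a stand-in function.

```python
import json


def request_key(url, params):
    """Canonical key for a request, so replay can match it later."""
    return json.dumps([url, params or {}], sort_keys=True)


class RecordingFetcher:
    """Used at ingest: calls the live service and logs each response."""

    def __init__(self, live_fetch):
        self._live_fetch = live_fetch
        self.log = {}

    def fetch(self, url, params=None):
        response = self._live_fetch(url, params)
        self.log[request_key(url, params)] = response
        return response


class ReplayFetcher:
    """Used at re-execution: answers only from the preserved log."""

    def __init__(self, log):
        self._log = dict(log)

    def fetch(self, url, params=None):
        key = request_key(url, params)
        if key not in self._log:
            # The fragile case: an input nobody captured at ingest time.
            raise KeyError(f"input was not captured at ingest: {key}")
        return self._log[key]


def page_program(fetcher):
    """Stand-in for the page's script; its output depends on a fetched input."""
    weather = fetcher.fetch("https://weather.example/api", {"lat": 52.2, "lon": 0.1})
    return f"Forecast: {weather['summary']}"


def fake_live_service(url, params):
    """Hypothetical external service as it answered on the day of the crawl."""
    return {"summary": "rain"}


recorder = RecordingFetcher(fake_live_service)
at_ingest = page_program(recorder)

replayer = ReplayFetcher(recorder.log)
at_replay = page_program(replayer)
print(at_ingest, "|", at_replay)
```

Replay reproduces the ingest-time output only for requests the program happened to make during the crawl. Any request that differs, for example because the future reader clicks somewhere the crawler did not, falls through to the `KeyError` branch.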

Re-executing the program in the future is a very fragile endeavour. This isn't because the Javascript virtual machine will have become obsolete. It is well-supported by an open source stack. It is because it is very difficult to be sure which are the significant inputs you need to capture, preserve, and re-supply. A trivial example is a Javascript program that displays the date. Is the correct preserved behavior to display the date when it was ingested, to preserve the original user experience? Or is it to display the date when it will be re-executed, to preserve the original functionality? There's no right answer.
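The date example can be made concrete by treating the clock as just another input. In this sketch the ingest date is a made-up value and `frozen_clock` and `live_clock` are hypothetical helpers; the point is only that the same preserved program gives two defensible answers depending on which clock the archive supplies.

```python
from datetime import datetime, timezone


def render_date(clock):
    """Stand-in for the preserved program: it displays 'today'."""
    return clock().strftime("%Y-%m-%d")


def frozen_clock(ingest_time):
    """Clock that always reports the moment of ingest."""
    return lambda: ingest_time


def live_clock():
    """Clock that reports the moment of re-execution."""
    return datetime.now(timezone.utc)


# Hypothetical ingest date, chosen only for illustration.
INGEST_TIME = datetime(2012, 6, 1, tzinfo=timezone.utc)

as_experienced = render_date(frozen_clock(INGEST_TIME))  # original user experience
as_functioning = render_date(live_clock)                 # original functionality
print(as_experienced, as_functioning)
```

Neither value is wrong. The archive has to decide, input by input, which of the two it is trying to preserve.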

Among the projects exploring the problems of preserving executable objects are:

- Olive at C-MU, which is preserving virtual machines containing the executable object but not, I believe, their inputs.

- The EU-funded Workflow 4Ever project, which is trying to encapsulate scientific workflows and adequate metadata for their later re-use into Research Objects. The metadata includes sample datasets and the corresponding results, so that correct preservation can be demonstrated. Generating the metadata for re-execution of a significant workflow is a major effort (PDF).

An alternative that preserves the user experience but not the functionality is, in effect, to push the system the next half-turn around the wheel, reducing the content to fixed-function primitives. Not to try to re-execute the program but to try to re-render the result of execution. The YouTube of Virtua Fighter is a simple example of this kind. It may be the best that can be done to cope with the special complexities of video games.

In the re-render approach, as the crawler executed the program it would record the display as a "video", and build a map of its sensitive areas with the results of activating each of them encoded as another "video" with its own map of sensitive areas.
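One way to picture the result of the re-render approach is as a tree of recordings, each carrying its own map of sensitive areas. The sketch below is an assumption about how such a structure might look, not a description of any existing tool. `Clip`, `capture`, `observe`, and the toy `SITE` are hypothetical, and the depth limit is my addition, needed because pages can link back to each other.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Rect = Tuple[int, int, int, int]  # x, y, width, height


@dataclass
class Clip:
    """A recorded 'video' plus the sensitive areas found while recording it."""
    video: str
    hotspots: List[Tuple[Rect, "Clip"]] = field(default_factory=list)

    def activate(self, x, y):
        """Return the clip recorded for the area containing (x, y), if any."""
        for (rx, ry, rw, rh), target in self.hotspots:
            if rx <= x < rx + rw and ry <= y < ry + rh:
                return target
        return None


def capture(state, observe, max_depth):
    """Record `state`, then recursively record what each sensitive area leads to.

    `observe(state)` is assumed to return (video_id, [(rect, next_state), ...]).
    """
    video, areas = observe(state)
    clip = Clip(video)
    if max_depth > 0:
        for rect, next_state in areas:
            clip.hotspots.append((rect, capture(next_state, observe, max_depth - 1)))
    return clip


# Toy site: each state maps to its recording and its sensitive areas.
SITE = {
    "home": ("home.webm", [((0, 0, 100, 20), "menu")]),
    "menu": ("menu.webm", [((0, 0, 100, 20), "home"), ((0, 20, 100, 20), "story")]),
    "story": ("story.webm", []),
}

root = capture("home", SITE.__getitem__, max_depth=2)
menu = root.activate(10, 10)
story = menu.activate(10, 30)
print(root.video, menu.video, story.video)
```

A future reader's clicks are looked up in the map rather than executed, so anything the crawler did not activate, or that lay beyond the depth limit, is simply absent from the preserved version.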

You can think of this as a form of pre-emptive format migration, an approach that both Jeff Rothenberg and I have argued against for a long time. As with games, it may be that this, while flawed, is the best we can do with the programs on the Web we have.


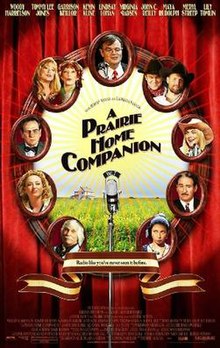

| A Prairie Home Companion |


| The Who Sell Out |

The programs that run in your browser these days also ensure that every visit to a web page is a unique experience, full of up-to-the-second personalized content. The challenge of preserving the Web is like that of preserving theatre or dance. Every performance is a unique and unrepeatable interaction between the performers, in this case a vast collection of dynamically changing databases, and the audience. Actually, it is even worse. Preserving the Web is like preserving a dance performed billions of times, each time for an audience of one, who is also the director of their individual performance.

We need to ask what we're trying to achieve by preserving Web content. We haven't managed to preserve everything so far, and we can expect to preserve even less going forward. There is a range of different goals:

- At one extreme, the Internet Archive's Wayback Machine tries to preserve samples of the whole Web. A series of samples of the Web through time turns out to be an incredibly valuable resource. In the early days the unreliability of HTTP and the simplicity of the content meant that the sample was fairly random; the difficulties caused by the evolution of the Web mean a gradually increasing systematic bias.

- The LOCKSS Program, at the other extreme, samples in another way. It tries to preserve everything about a carefully selected set of Web pages, mostly academic journals. The sample is created by librarians selecting journals; it is a sample because we don't have the resources to preserve every academic journal, even if there were an agreed definition of that term. Again, the evolution of the Web is making this job gradually more and more difficult.

There are two pieces of good news here. First, INA's archive proxy allows each archive to mix-and-match multiple crawlers in a unified collection process. Second, at least in principle, Memento now allows a unified view across all Web archives. Even though most of them use the same crawler, they may do so in different ways. Thus, combining different approaches should "just work" to provide much more complete preservation of the Web than any individual archive, even the Internet Archive, can achieve on its own.
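The "unified view" amounts to asking every archive what it holds for a URL and picking the capture closest to the date the reader wants. Memento does this over HTTP using datetime negotiation; the sketch below leaves the protocol out entirely and shows only the selection step, with hypothetical archive names and capture dates.

```python
from datetime import datetime, timezone


def utc(year, month, day):
    return datetime(year, month, day, tzinfo=timezone.utc)


def closest_memento(timemaps, target):
    """Pick the capture nearest to `target` across all archives.

    `timemaps` maps an archive name to the capture datetimes it holds
    for one URL. Returns (archive, captured) or None if nothing is held.
    """
    candidates = [
        (abs(captured - target), archive, captured)
        for archive, captures in timemaps.items()
        for captured in captures
    ]
    if not candidates:
        return None
    _, archive, captured = min(candidates)
    return archive, captured


# Hypothetical holdings of two archives for the same URL.
TIMEMAPS = {
    "archive-a.example": [utc(1998, 12, 1), utc(2005, 3, 9)],
    "archive-b.example": [utc(2001, 7, 4)],
}

best = closest_memento(TIMEMAPS, utc(2001, 1, 1))
print(best)
```

Here the smaller archive supplies the best answer, which is the point: the combined view can be better than the view from any single archive, however large.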

I pointed to NVIDIA's "gaming as a service" above. Mozilla just announced the generalized form of this, a Javascript codec that runs in your browser and can deliver 1080p 60fps video using only web-standard technology, no plugins. This is the kind of thing I predicted nearly 2 years ago.

Later the same day, 5 stories apart on Slashdot, we find two more examples of the trend to run code in your browser.

First, "The Turbulenz HTML5 games engine has been released as open source under the MIT license. The engine is a full 3D engine written in TypeScript and using WebGL."

This engine can be seen in action in this video from a year ago.

Second, "Mozilla and Epic (of Epic Megagames fame) have engineered an impressive First Person OpenGL demo which runs on HTML5 and a subset of JavaScript. Emscripten, the tool used, converts C and C++ code into 'low level' JavaScript. According to Epic, The Citadel demo runs 'within 2x of native speeds' and supports features commonly found in native OpenGL games such as dynamic specular lighting and global illumination."

I ran this demo on my Asus eeebox running Ubuntu 12.04 and Firefox. It takes a long time to prepare the JavaScript and download the more than 50MB of data for off-line use. The fullscreen HD graphics were really impressive, but the eeebox isn't a brawny gaming machine; it could only manage 16fps. Still, 16fps HD on a fairly wimpy machine inside the browser shows the way things are going.

Along the same lines of "stuff that runs in your browser that'll be hard to preserve", we have phone calls.
